In technical interviews, roughly 30 to 40% of problems can be solved—or significantly optimized—using arrays and hashing. These structures test a core engineering skill: the ability to trade memory for speed. By mastering these two concepts, you learn to transform slow, brute-force solutions (O(n²)) into efficient, linear-time algorithms (O(n)).

The Array: Contiguous Memory and Random Access

An array is the simplest data structure. It stores elements in contiguous memory locations, which allows for O(1) random access. If you know the index, you can retrieve the value instantly.

However, arrays have limitations. Inserting or deleting elements from the middle requires shifting all subsequent elements, leading to O(n) time complexity for these operations. Understanding this tradeoff is crucial when choosing between arrays and other structures like Linked Lists.

The Power of Hashing

Hashing is an optimization mindset. It involves using a Hash Function to map data of arbitrary size to a fixed-size value (a hash). This hash then acts as an index in an underlying array, allowing for near-instant retrieval.

Key Hash-Based Structures

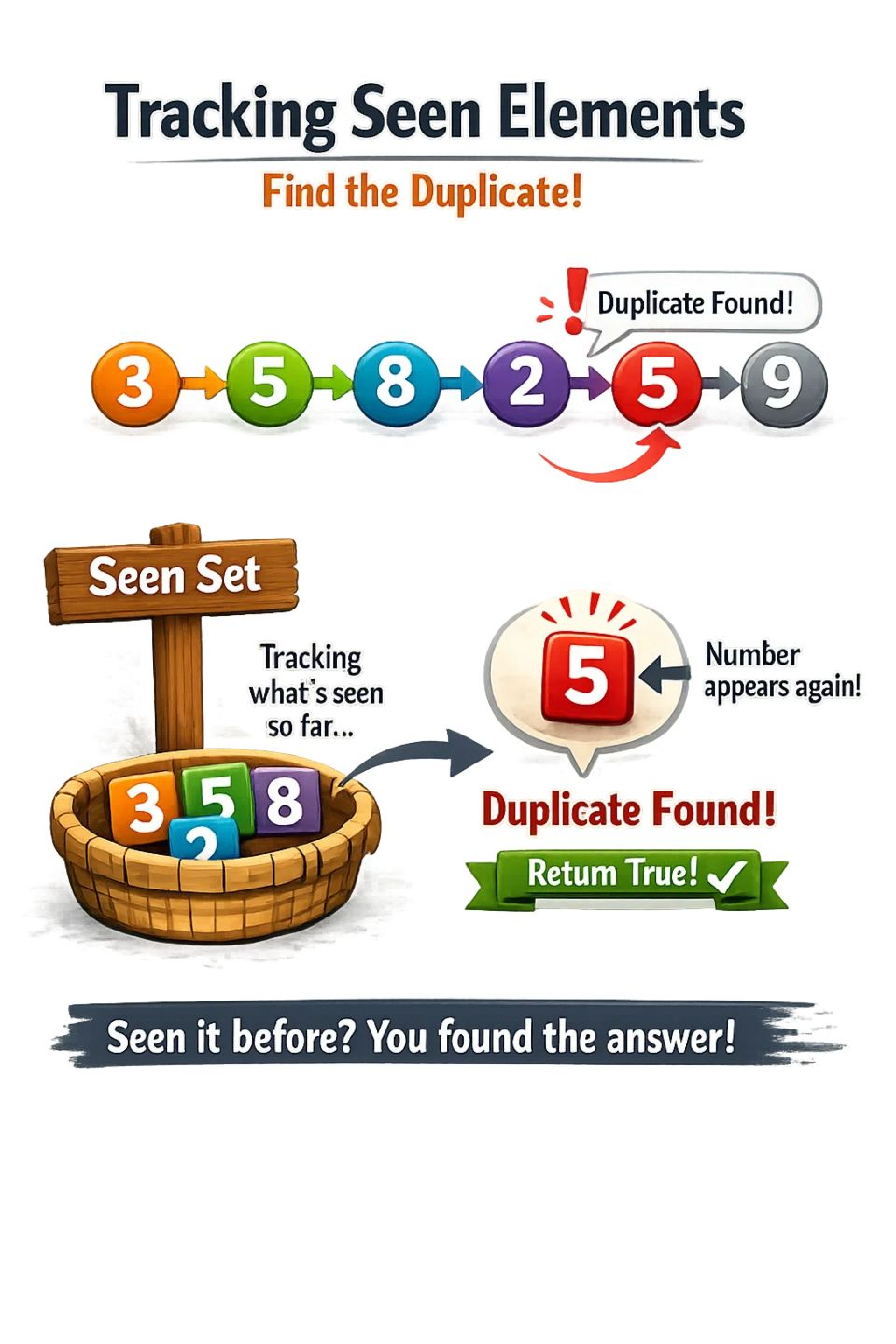

- HashSet: Used when the only concern is existence. It stores unique elements and answers the question: "Have I seen this before?" in O(1) average time.

- HashMap: Used when you need to maintain relationships. It stores Key-Value pairs (e.g., mapping a value to its frequency or an element to its index).

Collision Resolution

When two different keys produce the same hash, a collision occurs. Professional-grade implementations handle this via Chaining (using linked lists at each index) or Open Addressing (searching for the next available slot). In interviews, we generally assume a good hash function that maintains O(1) average time for operations.

Strategic Patterns to Master

Beyond simple lookups, hashing enables several powerful problem-solving patterns:

1. Complement Lookup

In problems like "Two Sum," instead of Checking every pair (O(n²)), we iterate through the array once. For each element, we calculate its complement (target - current) and check if that complement exists in our HashMap. This reduces the complexity to O(n).

2. Frequency Counting

By storing elements as keys and their counts as values, we can solve problems related to anagrams, duplicates, and top-k frequent elements in linear time.

3. Grouping by Key

Hashing allows us to categorize data efficiently. For example, to group anagrams, we can use a sorted version of the string or a character frequency count as the hash key, mapping it to a list of original strings.

The Golden Rule

Every engineer eventually learns a simple heuristic: When things get hard, throw a HashMap at it. If your solution involves repeated searching or nested scans, there is a high probability that a hash-based structure can optimize it to constant time lookups.

Hashing is more than a data structure; it is a way of thinking about data relationships. Once you master the "lookup mindset," technical interviews stop feeling like riddles and start feeling like predictable patterns.